
The whole process is automated, which reduces the chance of error. You can use the scraped data to generate website content, write product summaries, or gather e-commerce information such as product prices and shipping costs. You can also scrape data with Google Sheets without learning to program.

Some web pages keep information behind a login, which means you need an account before you can scrape anything from them. Making an HTTP request from a Python script is different from opening a page in your browser: being able to log in with your browser doesn't mean you'll be able to scrape the page with your script. If you ever get lost in a large pile of HTML, remember that you can always go back to your browser and use the developer tools to explore the HTML structure interactively.

When you take another look at the code you used to select the items, you'll see that that's what you targeted. You filtered for only the
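Because a script has to perform the login itself, a common pattern is to post the login form and keep the returned cookies for later requests. The sketch below uses only the Python standard library. The URL `https://example.com/login`, the form field names `username` and `password`, and the helper names are all hypothetical placeholders, and real sites often need extra steps such as CSRF tokens. The network call is left commented out so the example runs offline.

```python
from http.cookiejar import CookieJar
from urllib.parse import urlencode
from urllib.request import HTTPCookieProcessor, Request, build_opener

# Hypothetical login endpoint; replace with the real form action URL.
LOGIN_URL = "https://example.com/login"


def build_login_request(username, password):
    """Build a POST request carrying the login form fields (assumed names)."""
    form = urlencode({"username": username, "password": password}).encode("utf-8")
    return Request(LOGIN_URL, data=form, method="POST")


def make_session():
    """Return an opener that stores cookies, plus the jar that holds them."""
    jar = CookieJar()
    opener = build_opener(HTTPCookieProcessor(jar))
    return opener, jar


login_request = build_login_request("alice", "example-password")
opener, jar = make_session()

# Sending the request would look like this (not executed here):
# with opener.open(login_request) as response:
#     html = response.read().decode("utf-8")
# Later calls to opener.open(...) would reuse the session cookies in `jar`.
```

The browser does the same thing behind the scenes, which is why a page that opens fine in the browser can still return a login form to a script that never sent credentials.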

You may then need extra scripts or a different tool to integrate the scraped data with the rest of your IT infrastructure. The world of web scraping is a rather varied landscape. Web Scraper is one of the most popular Chrome scraper extensions.

URLs can hold more information than just the location of a file. Some websites use query parameters to encode values that you submit when performing a search. You can think of them as query strings that you send to the database to retrieve specific records. Web scraping is the process of collecting information from the Internet. Even copying and pasting the lyrics of your favorite song is a form of web scraping!
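To make the idea of query parameters concrete, here is a small sketch that splits a search URL into its parts and then builds a new search URL with different values. The URL `https://example.com/jobs` and the parameter names `q` and `l` are made up for illustration; a real site defines its own parameter names.

```python
from urllib.parse import parse_qs, urlencode, urlsplit

# Hypothetical search URL with two query parameters.
url = "https://example.com/jobs?q=software+developer&l=Australia"

# Split the URL into scheme, host, path, query, and fragment.
parts = urlsplit(url)

# Decode the query string into a dict mapping each name to a list of values.
params = parse_qs(parts.query)

# Build a new URL for a different search by swapping in a new query string.
new_query = urlencode({"q": "data engineer", "l": "Australia"})
new_url = parts._replace(query=new_query).geturl()

print(params)
print(new_url)
```

Changing only the query string and requesting the new URL is often all it takes to page through search results from a script.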

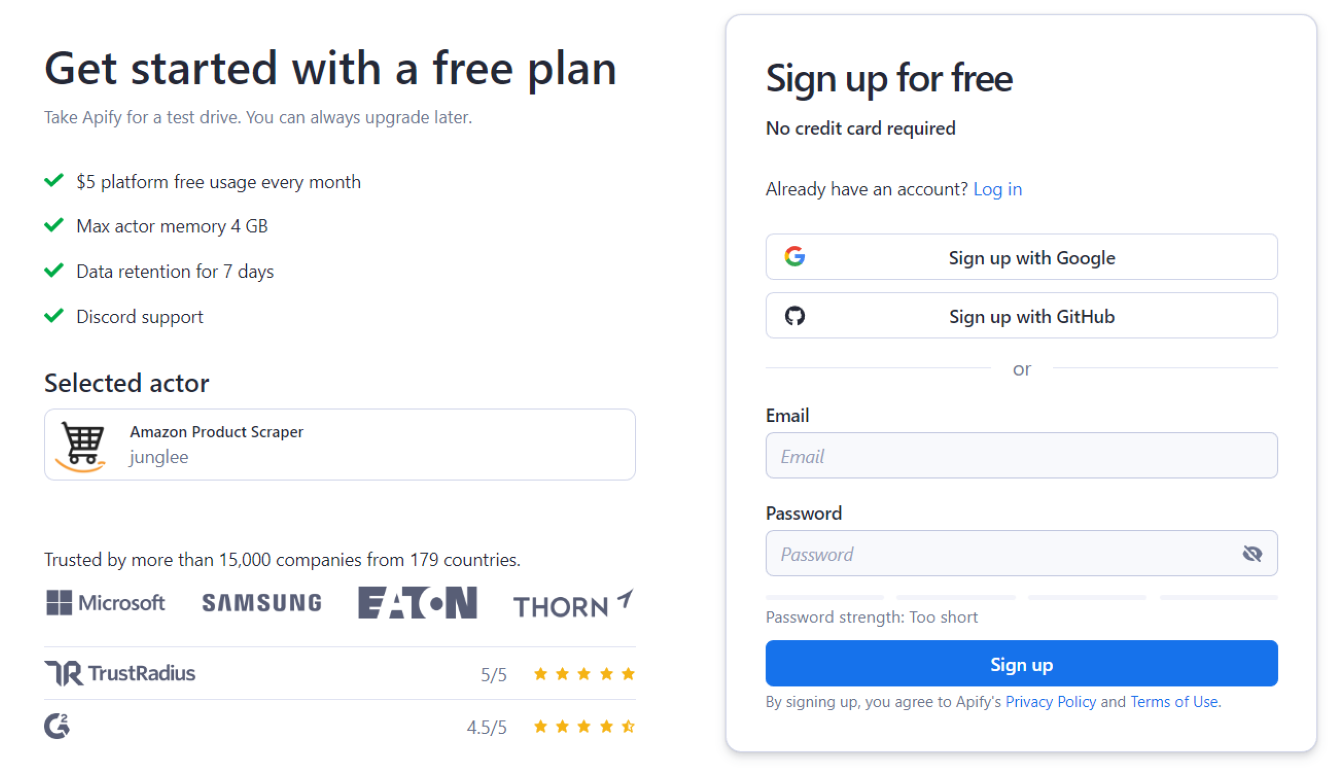

Developers can easily navigate between different blocks of code with this language. Web Scraper has a point-and-click interface that keeps web scraping simple. ParseHub's free version has a limit of 5 projects with 200 pages per run. With a paid subscription, you get up to 120 private projects with unlimited pages per crawl and IP rotation. Cheerio is a library that parses and manipulates HTML and XML documents.

Below, we create the object and show the result of web scraping. Follow the same process to create as many data elements as you need. Automated web scrapers need very little maintenance over time. Once you have written your Python script, you can run it from the command line. This starts the spider, which begins extracting data from the website.
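As a rough illustration of a script that can be run from the command line, the sketch below parses a small inline HTML snippet with the standard library's `html.parser` and prints each link it finds. The sample HTML, the class name `LinkCollector`, and the function `extract_links` are invented for this example; a real scraper would fetch the HTML from a website instead of using a hard-coded string.

```python
from html.parser import HTMLParser

# Made-up HTML standing in for a downloaded page.
SAMPLE_HTML = """
<ul>
  <li class="product"><a href="/items/1">Blue mug</a></li>
  <li class="product"><a href="/items/2">Red mug</a></li>
</ul>
"""


class LinkCollector(HTMLParser):
    """Collect (link text, href) pairs from <a> tags."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._current_href = None

    def handle_starttag(self, tag, attrs):
        # Remember the href while we are inside an <a> element.
        if tag == "a":
            self._current_href = dict(attrs).get("href")

    def handle_data(self, data):
        # Record non-empty text that appears inside an <a> element.
        if self._current_href is not None and data.strip():
            self.links.append((data.strip(), self._current_href))

    def handle_endtag(self, tag):
        if tag == "a":
            self._current_href = None


def extract_links(html):
    collector = LinkCollector()
    collector.feed(html)
    return collector.links


if __name__ == "__main__":
    for text, href in extract_links(SAMPLE_HTML):
        print(f"{text}: {href}")
```

Saved under a name such as `scraper.py` (a hypothetical filename), the script would be started with `python scraper.py`.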

With so many tools available, it is unfortunately not always easy to quickly find the right one for your own use case and make the right choice. That's exactly what we want to look at in today's article. Roberta Aukstikalnyte is a Senior Content Manager at Oxylabs.
