
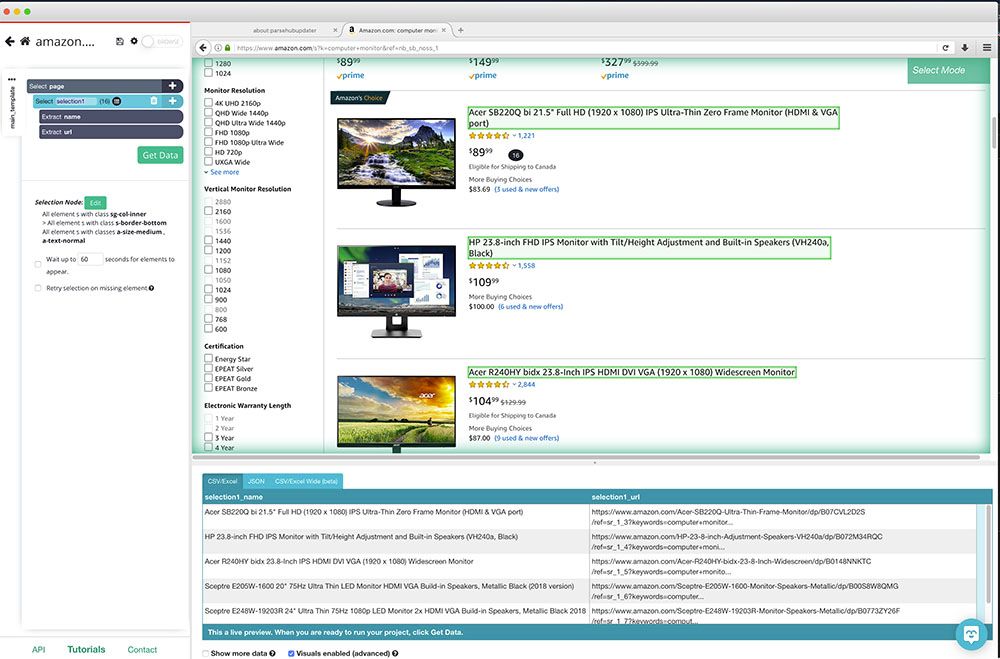

If you are relatively new to the technical side of web scraping, consider tools with a gentler learning curve. These are typically tools with a point-and-click GUI that let you extract data from websites more quickly. Every industry benefits most when it extracts data from its own niche. Data scraping tools are in demand in the 21st century as we approach a world where data is the fuel for every domain. People who use an AI-supported free website scraper are better able to handle complex websites and extract data that sits deeper in the page code. Employees working on the project are more productive with an AI-powered data scraper because the tool takes care of most of the work. From gathering real-time web data to structuring and indexing it within hours, work that might take weeks by hand, a smart data scraper handles it efficiently. Businesses today are finding new ways to innovate and grow as technology develops. So, for those planning to innovate in the field of big data, an AI-powered data scraper is a strong choice.

Daily Requests

In this article, we will walk you through some of the things to consider when choosing a web scraping service. By the end of this post, you will see how web scraping can help your business. This article also describes 25 of the most popular web scraping ideas to grow your business. Pick one of them and see how web scraping and data can boost your business.

Throughout my career, I've tried and tested various web scraping software. Some of these website scraping tools were garbage (don't worry, I haven't included them in this post), while others were the real deal. Web scraping is a way to fetch a large volume of public data from websites. It automates data collection and converts the scraped data into formats of your choice. Real estate agents use web scrapers to find available properties for rent, for example.

The 8 Best Tools for Web Scraping

Scrape what matters to your business on the web with these powerful tools. In this tutorial we will see how to use a proxy with the node-fetch package. We will also discuss how to choose the right proxy provider.

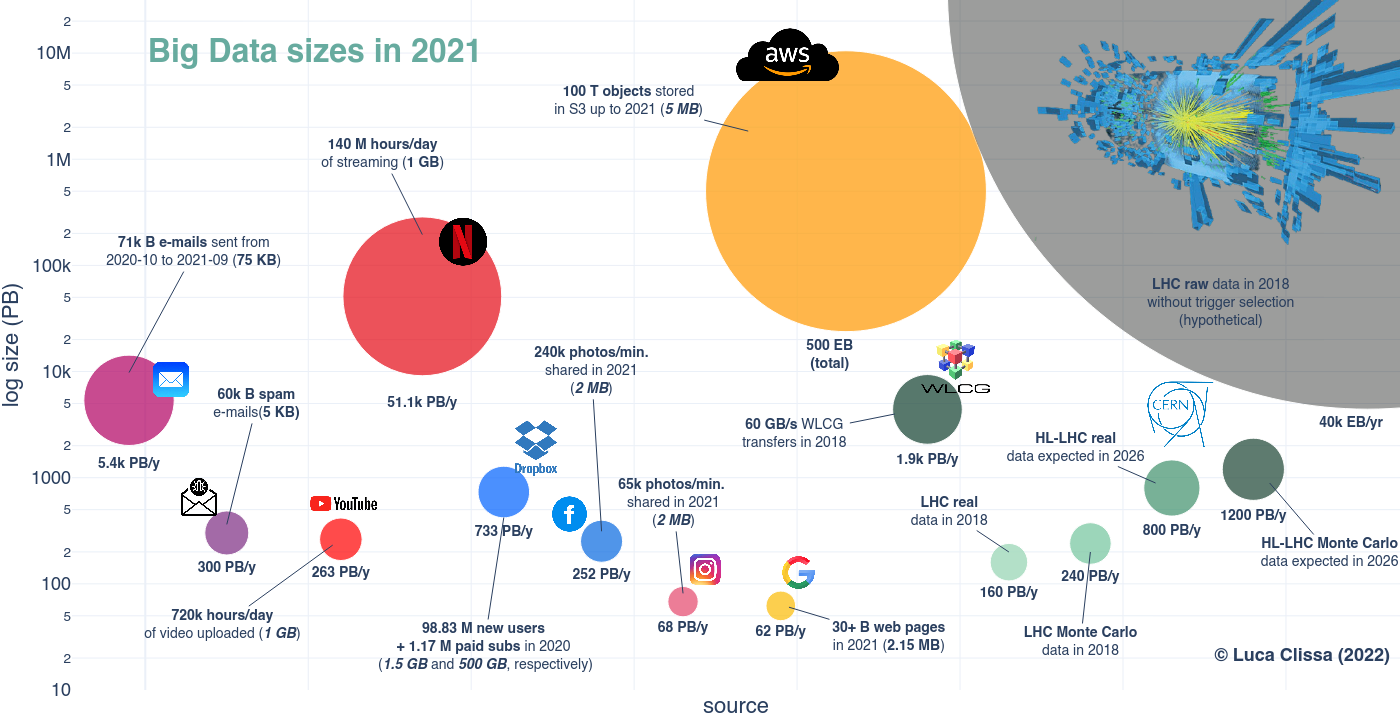

Web data extraction makes it easier to fetch data from multiple social media sites to gain insights and fuel your marketing strategy. Diffbot offers a set of web APIs that return the scraped data in a structured format. The service supports sentiment and natural language analysis, though it is rather on the pricey side, with the smallest plan starting at USD 300 a month. Major search engines like Google rely on large-scale web scraping. Smaller web scraping projects can be used to solve small-scale problems too. There are plenty of interesting large-scale and small-scale web scraping projects to take on.

Before getting started, you may want to look into an in-depth guide to building an automated web scraper with the web scraping tools available in Python. Crawlers, on the other hand, can use search engine algorithms to collect data from roughly 40% to 70% of online web pages. So whenever you are considering web scraping, give Python scripts and crawler-based automated web scrapers a chance. Web Scraper.io is an easy-to-use, highly accessible web scraping extension that can be added to Firefox and Chrome.

Inspecting the target site should be your first step for any web scraping project you want to tackle. You'll need to understand the site structure to extract the information that's relevant to you. Start by opening the site you want to scrape in your favorite browser.

Web Unlocker - Bright Data

What's more, Naghshineh reports that ARR has grown 20x year-over-year, and the company became cash-flow positive six months ago, a laudable milestone for such a young company. It has also managed to be exceptionally capital-efficient, with Naghshineh reporting that he has spent only half of the $400,000 in pre-seed money his company received. Kevin Sahin worked in the web scraping industry for 10 years before co-founding ScrapingBee. BS4 (Beautiful Soup 4) is a great choice if you decided to go with Python for your scraper but do not want to be restricted by any framework requirements. Scrapy is definitely for an audience with a Python background. While it serves as a framework and handles much of the scraping on its own, it is still not an out-of-the-box solution and requires sufficient experience in Python.

HTML is primarily a way to present content to users visually. Extract data from hundreds of Google Maps businesses and places in seconds. Get Google Maps data including reviews, images, opening hours, location, popular times, and more. Go beyond the limits of the official Google Places API. Download data with the Google Maps extractor in JSON, CSV, Excel, and more. This is the final step in web scraping using this particular library.

Regard a Website's Text Data

However, if you request a dynamic website in your Python script, you won't get the rendered page content, because that content is generated by JavaScript in the browser. It can be challenging to wrap your head around a long block of HTML code. To make it easier to read, you can use an HTML formatter to clean it up automatically. Good readability helps you better understand the structure of any code block. This can make it easier to see the relationships between data points, as well as cause-and-effect dynamics that can affect your business model. With price scraping, a perpetrator may use a botnet to launch bots that scrape competitors' databases. This way, they may be able to obtain information about competitors' prices.

Various web scraping tools are available, and the choice of tool will depend on the specific requirements of your project. Some popular web scraping tools automate data extraction and let you pull data from websites quickly and efficiently. OpenAI recently announced that site operators can now block its GPTBot web crawler from scraping their websites. Scraping a page involves fetching it and extracting data from it. Collecting personal data without consent can lead to legal penalties. Web scraping can also be used to access lists of leads on the web. By finding and parsing these, you can streamline your lead-generation strategy, casting a wide net and then sorting through to find the biggest fish.

Scrapinghub has scraping infrastructure that can be scaled up to whatever level you want. While scaling up is what Scrapinghub loves to do, it doesn't compromise on quality. It has developed quality assurance methods and tools to provide you with clean and actionable data. For this, it has designed fast manual, semi-automated, and fully automated testing processes. With this service, you can largely sit back and relax because it takes care of everything. From building and maintaining a scraper to ensuring data quality to data delivery, it handles every part of the process.

What Else Do You Need to Know About Web Scraping?

Take your data collection process to the next level from $50/month + VAT. To prevent web scraping, website operators can take a range of different measures. The robots.txt file is used to block search engine bots, for example. The publication has updated its T&Cs to include rules that prohibit its content from being used to train artificial intelligence systems. Previous research areas include RPA, process automation, MSP automation, Ordinal Inscriptions and NFTs, IoT, and FinTech. The provider might not release parts of the full scraping result, or might edit the output to control what the client gets to see. Now, after reading this, you should be able to identify the web scraping service that works for you in terms of budget, scalability, or any other criteria. You will get a Service-Level Agreement (SLA), a kind of legal guarantee that ensures timely delivery of data without compromising quality. It will let you integrate your new web scraping solution into your environment or workflow in the form of an internal database, CRM, or API.
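To make the robots.txt mechanism mentioned above concrete, here is a minimal sketch of how a scraper might check the rules before fetching a page. It uses Python's standard `urllib.robotparser`. The robots.txt content, the `MyScraper` user agent, and the example.com URLs are assumptions made up for illustration; a real scraper would download the file from the target site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block GPTBot everywhere and keep
# every other crawler out of /private/ only.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /private/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def is_allowed(parser: RobotFileParser, user_agent: str, url: str) -> bool:
    """Return True if the rules let this user agent fetch the URL."""
    return parser.can_fetch(user_agent, url)


parser = build_parser(ROBOTS_TXT)
for agent, url in [
    ("GPTBot", "https://example.com/articles/1"),
    ("MyScraper", "https://example.com/articles/1"),
    ("MyScraper", "https://example.com/private/report"),
]:
    print(agent, url, is_allowed(parser, agent, url))
```

Note that robots.txt is advisory: it tells well-behaved crawlers what to skip but does not technically stop a scraper that ignores it.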

When it comes to customer service, you get on-demand troubleshooting support from their team of data experts around the clock. ScrapeHero is a sought-after service because of its scalability. It can crawl and scrape countless pages per second and scrape billions of web pages every day.

Use IFTTT to Scrape a Website

It is not just about scraping the data but also about doing it in a legal and safe way, without causing harm to the website or yourself. Many details make web scraping complex and challenging. If speed matters to you, Datahen is a good fit because it is one of the fastest when it comes to web scraping. It does not waste your time in long feedback cycles, missing data, or deliberation over your needs and requirements. Apify has succeeded as a service partly because of its experts, who stay with you throughout. From initial analysis of your requirements to final data delivery, you will be served by data specialists in web scraping and automation. The reason many clients love ScrapeHero is that you don't need any software, hardware, scraping tools, or skills; they do it all for you.

Consequently, many businesses hire someone to handle their web scraping projects. Web scraping can unlock valuable insights for businesses of all kinds. Because every web scraper is different, finding the best tool for your project can be hard. Fortunately, we've put together a guide on how to find the best web scraper for your needs. They also provide an API to integrate data directly into your business processes. Different businesses have different needs, but there is no need to worry if yours are very specific. From the point where you articulate your requirements to data delivery in a format of your choice, ProWebScraper impresses with its service every step of the way. The collected data can be accessed by the client through the DaaS provider's platform, API, or other delivery mechanisms, such as email or FTP. Identify the data that needs to be collected and the websites that need to be scraped. Yet with 40% of the global population still having no internet access, the market will continue growing as more professionals come online. You get easy access to priority support by email or phone with a 24-hour response time.

Generally speaking, choosing a SaaS platform for your scraping project will provide you with the most comprehensive package, both in terms of scalability and maintainability. Scrapers come in many shapes and forms, and the exact details of what a scraper collects will vary greatly depending on the use case. Great Learning's Blog covers the latest developments and innovations in technology that can be leveraged to build rewarding careers. You'll find career guides, tech tutorials, and industry news to keep yourself updated with the fast-changing world of tech and business.

It can fetch both negative and positive posts and comments in real time. Photo by Glenn Carstens-Peters on Unsplash. A search engine that scrapes the web for specific information. While all problems have solutions, niche problems based on geography and social demographics are where solutions are yet to be found. Niching down can be where an audience is found and retained. Below is a list of the different kinds and niches in which web scraping business ideas can be explored.

Request blocking features and job sequencers to collect web data in real time. It handles browsers, proxies, and CAPTCHAs, which means that raw HTML from any website can be obtained with a simple API call. Scrapy is a web scraping library used by Python developers to build scalable web crawlers. It is a complete web crawling framework that handles the functionality that makes building web crawlers difficult, such as proxy middleware and querying requests, among many others.

The growing interest in data governance and malicious activity is said to put web scraping's reputation in something of a grey area. Yet everyday business is conducted with the same tools in an ethical, legitimate way. Online property listings make it easy for real estate agents to gather the latest information on vacancies in their areas. APIs can be built to auto-generate multiple listing service (MLS) listings onto a company's website, presenting them as its own. Companies use web scraping because it offers a competitive edge. In an April 2022 survey of 80 technology leaders, only 16% were using web scraping.

This can be used to upload files and fill in forms if required. These automated scrapers use different programming languages and crawlers to fetch the necessary data, index it, and store it for further analysis. Consequently, a simpler language and an efficient web crawler are important for web scraping.

Products & Solutions

Web scraping tools and self-service software are good options if the data requirement is small and the source websites aren't complicated. Web scraping tools and software cannot handle large-scale web scraping, complex logic, or CAPTCHA bypassing, and they do not scale well when the number of websites is high. Bright Data's Web Unlocker scrapes data from websites without getting blocked. The tool is designed to take care of proxy and unblocking infrastructure for the user.

Common Crawl will be ideal if its datasets match your needs. If the quality of the data it has pre-scraped is sufficient for your use case, it may be the easiest way to evaluate web data. Last but not least, there is of course always the option to build your very own, fully customized scraper in your favorite programming language. ScrapingBee is for developers and tech companies that want to handle the scraping pipeline themselves without taking care of proxies and headless browsers.

Example: Web Scraping with Beautiful Soup

The WantedList is assigned sample data that we want to scrape from the given page URL. To get all the category page links from the target page, we need to supply only one example data element in the WantedList. Therefore, we provide just a single link to the Travel category page as a sample data element. The requests library gives you a user-friendly way to fetch static HTML from the Internet using Python.
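As a rough illustration of the "extract" half of that workflow, the sketch below pulls category links out of a static HTML snippet. It deliberately uses Python's built-in `html.parser` rather than Beautiful Soup or a learn-by-example scraper, so that it runs without third-party packages. The sample HTML, the `/category/` path prefix, and the example.com base URL are all assumptions made up for this example.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin

# Hypothetical static HTML, standing in for a page you already fetched.
SAMPLE_HTML = """
<ul class="nav">
  <li><a href="/category/travel">Travel</a></li>
  <li><a href="/category/music">Music</a></li>
  <li><a href="/about">About</a></li>
</ul>
"""


class LinkExtractor(HTMLParser):
    """Collect absolute URLs of <a> tags whose href starts with a prefix."""

    def __init__(self, base_url: str, path_prefix: str) -> None:
        super().__init__()
        self.base_url = base_url
        self.path_prefix = path_prefix
        self.links: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if href and href.startswith(self.path_prefix):
            # Resolve the relative link against the page's base URL.
            self.links.append(urljoin(self.base_url, href))


def extract_category_links(html: str, base_url: str = "https://example.com") -> list[str]:
    """Return every category link found in the given HTML."""
    parser = LinkExtractor(base_url, "/category/")
    parser.feed(html)
    return parser.links


print(extract_category_links(SAMPLE_HTML))
```

Here the selection rule (the path prefix) is written by hand. A tool driven by a sample list instead infers a comparable rule from the one example link you give it.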

Xtract.io believes in building custom solutions that give its customers the flexibility and agility they look for. Xtract.io also provides accurate location data so you can get precise and comprehensive insights into your market, customers, competitors, and products. Integrate.io is widely known as a data integration and ETL platform that streamlines data processing and saves valuable time.

Verify data sources: perform a data count check and verify that the table and column data types meet the specifications of the data model. Make sure check keys are in place and remove duplicate data. If this is not done correctly, the aggregate report could be inaccurate or misleading. In general, an ETL tester is a guardian of data quality for the organization and should have a voice in all major discussions about data used in business intelligence and other use cases. Application Programming Interfaces (APIs) using Enterprise Application Integration (EAI) can be used in place of ETL for a more flexible, scalable solution that includes workflow integration. While ETL is still the primary data integration resource, EAI is increasingly used with APIs in web-based settings.

What Is ETL Data Integration?

Stress-test your data flow pipelines to ensure they can handle large volumes of data and scale up to meet demand. This will support the reliability and capacity of your data pipeline. Make sure the data complies with specific business requirements by applying data validation criteria. This helps improve the data's accuracy and completeness. Experience in writing automated test cases using flat files and XML.
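The source checks described above (a row count check, column type checks against the data model, and duplicate removal) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `EXPECTED_TYPES` data model, the `id` key column, and the sample rows are hypothetical, and a real ETL test suite would usually run equivalent checks in SQL against the warehouse.

```python
# Hypothetical data-model spec: column name -> expected Python type.
EXPECTED_TYPES = {"id": int, "email": str, "amount": float}


def validate_rows(source_count: int, rows: list[dict], key: str = "id"):
    """Run count, type, and duplicate checks.

    Returns (clean_rows, problems), where problems is a list of messages.
    """
    problems = []

    # 1. Count check: did we load as many rows as the source reported?
    if len(rows) != source_count:
        problems.append(
            f"row count mismatch: expected {source_count}, got {len(rows)}"
        )

    seen_keys = set()
    clean_rows = []
    for index, row in enumerate(rows):
        # 2. Type check: every column must exist and have the expected type.
        bad_columns = [
            column
            for column, expected in EXPECTED_TYPES.items()
            if not isinstance(row.get(column), expected)
        ]
        if bad_columns:
            problems.append(f"row {index}: bad or missing columns {bad_columns}")
            continue

        # 3. Duplicate check: keep only the first row for each key value.
        if row[key] in seen_keys:
            problems.append(f"row {index}: duplicate {key}={row[key]}")
            continue

        seen_keys.add(row[key])
        clean_rows.append(row)

    return clean_rows, problems


sample_rows = [
    {"id": 1, "email": "a@example.com", "amount": 9.5},
    {"id": 1, "email": "a@example.com", "amount": 9.5},   # duplicate key
    {"id": 2, "email": "b@example.com", "amount": "12"},  # wrong type
]
clean, issues = validate_rows(3, sample_rows)
print(clean)
print(issues)
```

Returning the problems as a list, instead of raising on the first failure, lets a tester report every issue found in a batch in one pass.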

Awareness of business intelligence needs, including data validation, dashboards, and data analytics for data accuracy. They should be able to validate data, design and run test cases, and analyze results. Mastery of relational databases is also needed, since ETL testing requires using SQL to access and manipulate data in databases and data warehouses. ETL automation is important for many reasons, including time savings, error reduction, increased efficiency, data quality assurance, scalability, and simpler data integration. The recovery rate is our dependent variable for the loss given default model. The recovery rate is limited to the interval between 0 and 1.

Benefits of Automation in ETL Testing

ETL tools can also include more performance-enhancing capabilities such as parallel processing and symmetric multiprocessing. This lets business intelligence tools query the data warehouse directly without processing the data. As a result, the tools can deliver reports and other results faster. Qlik offers data integration and big data management solutions that help its customers stay agile. Qlik Compose, its data warehouse automation solution, provides ETL automation for the agile data warehouse. Automated ETL processes support business intelligence by ensuring a steady flow of quality data into a data warehouse.

Once the loading step is finished in the ETL process, the ETL tool sets the stage for long-term analysis and use of the data. ETL tools break down data silos and make it easy for data scientists to access and analyze data and turn it into business intelligence. Finally, many organizations are considering a new architecture to replace their aging data warehouses: the "data fabric". Since then, many articles, vendors, and analyst firms have adopted the term. You will shape and accelerate the delivery of cloud-based services and processes that run real-time analytics.

A data integration platform does more than help a company make the jump to its next level of success. These platforms also generate the operational efficiencies that set companies up for sustained growth in the future. Companies can scale up without the bottlenecks of disconnected data sources. These platforms aggregate data from any source, internal or external, in a single repository. Eliminate infrastructure management with automatic provisioning and worker management, and consolidate all your data integration needs into a single service.

More easily support various data processing frameworks, such as ETL and ELT, and various workloads, including batch, micro-batch, and streaming. Schedule a one-on-one consultation with experts who have worked with countless clients to build winning data, analytics, and AI strategies. Read how the IBM DataOps methodology and practice can help you deliver a business-ready data pipeline. This quality makes data easy to discover, select, and provision to any destination while reducing IT dependency, accelerating analytics outcomes, and lowering data costs.

Understand and Repeat the Business Case

High-throughput sequencing provides a cost-effective means of generating high-resolution data for hundreds or even thousands of strains, and is rapidly superseding methods based on a few genomic loci. The wealth of genomic data deposited in public databases such as the Sequence Read Archive/European Nucleotide Archive provides a powerful resource for evolutionary analysis and epidemiological surveillance. However, many of the analysis tools currently available do not scale well to these large datasets, nor do they provide the means to fully integrate ancillary data.

Many data integration platforms offer pre-built data connectors right out of the box. With plug-and-play functionality, pre-built connectors can add new data sources in a matter of clicks. With Scalable AI's simple-to-use big data integration solution, you can quickly ingest, prepare, and deliver data to build a complete picture of your business that drives actionable insights. We use tools such as Cassandra and MapReduce to provide robust, scalable databases and dynamic parallel processing. You will be responsible for developing innovative platforms, tools, and solutions to enable seamless and secure data integration. You will design scalable, secure, high-performance data architecture in the cloud. Data integration is never a one-and-done process because data and data sources are constantly changing. To keep up, companies need a data integration framework with a basic structure that can be extended, replicated, and scaled as new sources and types of data are added to the mix.

Lower Costs for Nonurgent Workloads with Flexible Job Execution

To address this challenge, companies need to adopt scalable data integration strategies that can handle ever-increasing data volumes and ensure efficient, reliable data integration. In conclusion, scaling data integration is a critical challenge for data-driven organizations. By implementing these strategies, organizations can effectively integrate and analyze their data, gaining valuable insights and making informed decisions. As the volume of data grows, companies need to make sure their data integration processes can handle the load without compromising performance.

PHYLOViZ 2.0 includes new data analysis algorithms and new visualization components, as well as the ability to save projects for subsequent work or for dissemination of results. With these scalable solutions available, you can streamline your data integration processes and unlock the full potential of your business's information assets. Transforming data from disparate sources into standard formats is fundamental to the data integration process. However, finding employees skilled in such transformations isn't easy. For example, over the past five years, 37 percent of the workforce with mainframe expertise has been lost. Similar skills gaps are occurring even for newer technologies such as the cloud and Hadoop.

Determine How Changes to Data Will Be Communicated

It should, in fact, guarantee that data will be reliably delivered, without loss, once any disruption is resolved. An effective data integration framework should integrate different data sources without requiring specialized expertise or coding. It should feature a simple visual interface that lets your existing team use a design-once, deploy-anywhere approach. Data-driven companies need growth-centric tech infrastructure to scale competitively. For many companies, a data integration platform is a core part of this infrastructure. It requires careful planning, testing, and monitoring to ensure that your data flows are accurate, consistent, and timely. In this article, we will discuss some of the key steps and best practices for building a robust data integration pipeline that can support your data-driven decisions.

Finally, data security and privacy are significant concerns in scaling data integration. As companies integrate data from different sources, they need to ensure that sensitive information is protected and compliant with data privacy regulations. Internal APIs often have weak security because they're intended for internal use. However, this is starting to change as companies become more aware of potential security threats, and governments require compliance with their security regulations. Internal or private APIs are meant to be used within a company and are not meant to be shared with other users. Dedicated to providing the best technical solution for better business development. Business Partner Magazine provides business tips for small business owners.

Although our API integrations team works remotely, time differences and communication gaps will not be a problem. That is because, at HeavyTask, we train all our staff to follow Agile methodologies such as Scrum, with practices like daily standups and iterative development. In addition, our team works with a range of project management and communication tools, providing nearly 24/7 support and smooth integration.

Four Best Practices for Implementing a Source-to-Pay Technology

Our team reviews resumes to assess technical skills, experience, and education. We also look for evidence of problem-solving, communication, and collaboration skills. Over the years, we have refined our team to ensure they can draw on their previous experience and technical knowledge to carry out any required task. Whether you are a small, medium, or large enterprise, or perhaps a startup, we at HeavyTask invite you to discuss your goals so that we can help you move your business forward. Our API integration experts are used to working with organizations worldwide that follow different business models and systems.

Lending companies are expected to know immediately whether a customer's loan is approved, conditioned, or denied. With API integration for credit reports, you can order and access a wide selection of credit reports from the three major credit bureaus. Keep in mind that the use of credit information is restricted by the rules of the credit bureaus. The future of serious development projects depends heavily on API design, creation, and integration. Businesses use APIs to observe off-market reactions, improve resource adoption, streamline daily operations, and improve productivity.

Technologies We Use

Give your customers exceptional experiences with expert digital engineering solutions, including DevOps, product engineering, AI and data analytics, digital EdTech, and more. Our team of skilled professionals brings a wealth of experience and knowledge to the development and integration process. We design effective strategies tailored to your specific business needs and goals, ensuring you get the most out of your API development project. Daffodil Software offers seamless integrations with top-tier shipping carrier APIs such as FedEx, USPS, and UPS, among others, for businesses in need of custom API integration services.

Documentation is drafted for every API, including specifications of how data is transferred between two systems. APIs simplify the implementation of new applications, business models, and digital products, and they enable effective integration with third-party products or services while speeding up their development. As a result, many developers and business owners are willing to pay for their use. For businesses, APIs make it possible to create services that deliver better customer experiences without significantly increasing cost.

Review All API Documentation Carefully

With APIs, you can unify the communication of data, devices, and applications. An API can also be described as an online programming interface for your organization, allowing your business applications to communicate seamlessly with all backend systems. With 20+ years in the software development industry, we've delivered solid IT products for businesses around the world.

Web browsers are built to render styles and execute scripts on behalf of web pages, changing how each page behaves and is displayed so that it is easy to read and use. For example, if you're trying to extract text from a web page and download it as plain text, a simple HTTP request might be enough. However, many websites rely heavily on JavaScript and may not display some content unless it is executed. In that case, using a browser takes away some of the work of getting the content. Google Sheets can also be used to scrape data from a page.
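As a rough illustration of the "simple HTTP request" case, the sketch below fetches a page with Python's standard library and reduces it to plain text. The names `TextExtractor`, `html_to_text`, and `fetch_text` are hypothetical helpers invented for this example, and the sample HTML is made up. It does not execute JavaScript, so content that a page builds in the browser will be missing from the result.

```python
from html.parser import HTMLParser
from urllib.request import urlopen


class TextExtractor(HTMLParser):
    """Collect visible text, skipping the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self._skip_depth == 0:
            self.chunks.append(text)


def html_to_text(html):
    """Return the text nodes of an HTML string, one per line."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return "\n".join(parser.chunks)


def fetch_text(url):
    """Plain HTTP GET followed by text extraction (no JavaScript is run)."""
    with urlopen(url, timeout=10) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return html_to_text(response.read().decode(charset, errors="replace"))


# Made-up sample page, used so the example runs without network access.
sample = (
    "<html><head><style>p{color:red}</style></head>"
    "<body><h1>Jobs</h1><p>Python developer</p>"
    "<script>render()</script></body></html>"
)
print(html_to_text(sample))
```

If the text you need is missing from the output for a real page, that is usually a sign the page depends on JavaScript and a browser-based approach is needed instead.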

In contrast to SaaS providers, desktop scrapers are installed applications over which you have full control. Because the application is limited by local system and network resources, however, you may run into scalability and site-blocking issues. Scraping scope: do you need to scrape only a few pre-set pages, or do you need to scrape most or all of the site? This factor may also determine whether and how you need to crawl the site for new links. Please feel free to check it out, should you want to learn more about web scraping, how it differs from web crawling, and a comprehensive list of examples, use cases, and technologies.

This site is a purely static website that doesn't run on top of a database, which is why you won't have to work with query parameters in this scraping tutorial. However, the unique resource's location will be different depending on which specific job posting you're viewing. You can see many job postings in a card format, and each of them has two buttons. If you click Apply, then you'll see a new page that contains more detailed descriptions of the selected job. You might also notice that the URL in your browser's address bar changes when you interact with the website.

Next, you'll learn how to narrow down this output to access only the text content you're interested in. Many modern web applications are designed to provide their functionality in collaboration with the clients' browsers. Instead of sending HTML pages, these apps send JavaScript code that instructs your browser to create the desired HTML. Web apps deliver dynamic content this way to offload work from the server to the clients' machines, as well as to avoid page reloads and improve the overall user experience.

However, when you try to run your scraper to print out the information of the filtered Python jobs, you'll run into an error:

If you're looking for a way to have public web data scraped regularly at a set interval, you've come to the right place. This tutorial will show you how to automate your web scraping processes using AutoScraper, one of the several Python web scraping libraries available. Your CLI tool can let you search for specific types of jobs or jobs in particular locations. However, the requests library comes with the built-in capacity to handle authentication. With these techniques, you can log in to websites when making the HTTP request from your Python script and then scrape data that's hidden behind a login.
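The CLI idea mentioned above could look something like the following sketch. It assumes the job postings have already been scraped into a list of dictionaries. The `JOBS` data, the `--keyword` and `--location` flags, and the function names are all hypothetical choices for illustration, not part of any particular tool.

```python
import argparse

# Hypothetical postings, standing in for the output of an earlier scraping step.
JOBS = [
    {"title": "Senior Python Developer", "location": "Berlin"},
    {"title": "Data Engineer", "location": "Remote"},
    {"title": "Python Analyst", "location": "Remote"},
]


def filter_jobs(jobs, keyword=None, location=None):
    """Keep jobs whose title contains keyword and whose location matches."""
    results = []
    for job in jobs:
        if keyword and keyword.lower() not in job["title"].lower():
            continue
        if location and location.lower() != job["location"].lower():
            continue
        results.append(job)
    return results


def build_parser():
    parser = argparse.ArgumentParser(description="Filter scraped job postings")
    parser.add_argument("--keyword", help="text that must appear in the job title")
    parser.add_argument("--location", help="exact location to match (case-insensitive)")
    return parser


def main(argv=None):
    args = build_parser().parse_args(argv)
    for job in filter_jobs(JOBS, args.keyword, args.location):
        print(f'{job["title"]} ({job["location"]})')


# Equivalent to running: python jobs.py --keyword python --location remote
main(["--keyword", "python", "--location", "remote"])
```

Passing the argument list to `main` explicitly keeps the example easy to try. In a real script you would call `main()` with no arguments so that `argparse` reads the command line.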

Here we create the object and show the result of web scraping. Follow the same process to create as many data elements as needed. Maintenance is low, as automated web scrapers need almost no upkeep in the long run. Once you have written your Python script, you can run it from the command line. This will start the spider and begin extracting data from the website. With such a large number of tools, it is unfortunately not always easy to quickly find the right one for your own use case and to make the right choice. That's exactly what we want to explore in today's article. Roberta Aukstikalnyte is a Senior Web Content Supervisor at Oxylabs.

The variability and inconsistencies between source designs will cause your engineers to spend a lot of time implementing the extractors for each source. You need to be aware of the source limitations when extracting data. For example, some sources impose limits on how much data you can extract at once. Your data engineers need to work around these obstacles to ensure system reliability. With notification-based extraction, the source notifies the ETL system that data has changed, and the ETL pipeline is run to extract the changed data.
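One common way to work around a per-request extraction limit is to pull the data in batches. The sketch below assumes a source that accepts an offset and a limit. `fetch_page`, `fake_fetch_page`, and `SOURCE_ROWS` are hypothetical stand-ins for a real source API, which may page differently (for example with cursors or tokens).

```python
def extract_in_batches(fetch_page, batch_limit):
    """Yield every record from a source that caps each request at batch_limit rows."""
    offset = 0
    while True:
        page = fetch_page(offset, batch_limit)
        if not page:
            break  # nothing left to read
        yield from page
        if len(page) < batch_limit:
            break  # a short page means this was the last one
        offset += len(page)


# Hypothetical in-memory source with seven records.
SOURCE_ROWS = [{"id": i} for i in range(1, 8)]


def fake_fetch_page(offset, limit):
    """Stand-in for a real API call that returns at most `limit` rows."""
    return SOURCE_ROWS[offset:offset + limit]


rows = list(extract_in_batches(fake_fetch_page, batch_limit=3))
print(len(rows))  # 7
```

Because the function is a generator, downstream transformation steps can start working on the first batch before the last one has been fetched.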

Verify data sources: perform a data count check and verify that the table and column data types meet the specifications of the data model. Make sure check keys are in place and remove duplicate data. If this is not done correctly, the aggregate report could be inaccurate or misleading. Overall, an ETL tester is a guardian of data quality for the organization and should have a voice in all major discussions about data used in business intelligence and other use cases. Application Programming Interfaces (APIs) using Enterprise Application Integration (EAI) can be used in place of ETL for a more flexible, scalable solution that includes workflow integration. While ETL is still the primary data integration resource, EAI is increasingly used with APIs in web-based settings.
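The source-verification checks described above (row counts, column types, duplicate detection and removal) can be sketched with Python's built-in `sqlite3` module. The `customers` table, its columns, and the helper function names are assumptions made up for this example. A real ETL test suite would run equivalent queries against the actual source and target systems.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER, email TEXT)")
conn.executemany(
    "INSERT INTO customers VALUES (?, ?)",
    [(1, "a@example.com"), (2, "b@example.com"), (2, "b@example.com")],
)

# Note: table and column names are interpolated into SQL below, so these
# helpers should only ever be called with trusted, hard-coded identifiers.


def row_count(conn, table):
    return conn.execute(f"SELECT COUNT(*) FROM {table}").fetchone()[0]


def column_types(conn, table):
    # PRAGMA table_info rows are (cid, name, type, notnull, dflt_value, pk).
    return {row[1]: row[2] for row in conn.execute(f"PRAGMA table_info({table})")}


def duplicate_keys(conn, table, key):
    query = f"SELECT {key} FROM {table} GROUP BY {key} HAVING COUNT(*) > 1"
    return [row[0] for row in conn.execute(query)]


def remove_duplicates(conn, table, key):
    # Keep the first stored row for each key value and delete the rest.
    conn.execute(
        f"DELETE FROM {table} WHERE rowid NOT IN "
        f"(SELECT MIN(rowid) FROM {table} GROUP BY {key})"
    )


print(row_count(conn, "customers"))             # 3
print(column_types(conn, "customers"))          # {'id': 'INTEGER', 'email': 'TEXT'}
print(duplicate_keys(conn, "customers", "id"))  # [2]

remove_duplicates(conn, "customers", "id")
print(row_count(conn, "customers"))             # 2
```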

Redwood RunMyJobs Enables Automated ETL

See how teams use Redwood RunMyJobs to speed up ETL and ELT processes through automation. Build processes in minutes using an extensive library of included integrations, templates, and wizards. This blog reviews the 15 best ETL tools currently available in the market. Based on your requirements, you can use one of these to improve your productivity with a marked improvement in operational efficiency.

A new variable corresponding to each date variable is calculated, which is basically the difference between the current date and the value of the date variable. For this reason, it is difficult to convert continuous variables to dummy variables. Let's consider the continuous variable months since the issue date.

Advantages Of Automation In ETL Testing
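To make the date-derived variable mentioned earlier concrete (months since the issue date), here is a minimal sketch. The function name `months_since`, the sample dates, and the choice to count whole calendar months are assumptions for illustration. A fixed reference date is used so the result is reproducible, whereas in practice you would typically pass `date.today()`.

```python
from datetime import date


def months_since(issue_date, reference_date):
    """Whole calendar months elapsed between issue_date and reference_date."""
    months = (reference_date.year - issue_date.year) * 12
    months += reference_date.month - issue_date.month
    if reference_date.day < issue_date.day:
        months -= 1  # the current month has not fully elapsed yet
    return max(months, 0)


# Fixed reference date for reproducibility (assumption for this example).
reference = date(2023, 12, 1)
issue_dates = [date(2023, 9, 15), date(2022, 12, 1), date(2023, 11, 30)]

print([months_since(d, reference) for d in issue_dates])  # [2, 12, 0]
```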

Here are the advantages you get by integrating ETL tools into your company. Learn more about job scheduling algorithms for optimizing task execution. From FCFS to priority scheduling, find the right algorithm for your business processes. A graphical interface simplifies the design and development of ETL processes. Summary report: verify the layout, options, filters, and the export functionality of the summary report.

ETL testing is the process of validating and verifying the ETL system. It ensures that every step goes according to plan, including extracting the data, transforming it to fit a target data model, and loading it into a destination database or data warehouse. Testing ETL processes can be complex because of the need to validate data transformations and ensure the process works as expected under different conditions. This includes checking the accuracy of data transformation, the reliability of data loading, the performance of the ETL process, and cloud data migration testing. |

Archives

December 2023

Categories |

RSS Feed
